Today’s alarmist without much research news is “New Web Code Draws Concern Over Risks to Privacy” about HTML5 and its threat to privacy. How evil of HTML5 and its creators.

The Real Deal

Persistent cookies are nothing new. Essentially the strategy works like this: Store data everywhere you can on the users footprint, and if data it deleted in a few locations, you copy it back from another location the next time you can. It’s regenerative by design. A popular example is evercookie which uses:

- Standard HTTP Cookies

- Local Shared Objects (Flash Cookies)

- Storing cookies in RGB values of auto-generated, force-cached PNGs using HTML5 Canvas tag to read pixels (cookies) back out

- Storing cookies in and reading out Web History

- Storing cookies in HTTP ETags

- Internet Explorer userData storage

- HTML5 Session Storage

- HTML5 Local Storage

- HTML5 Global Storage

- HTML5 Database Storage via SQLite

Note that several of these aren’t HTML5 specific. More than one of which isn’t cleared by just “erasing cookies”.

HTML5 does add a few new possibilities, but they are also by design as easy to control, monitor and restrict as your browser (or third-party add-on) will allow. HTML5 storage mechanisms are bound to the host that created them making them easy to search/sift/manage as HTTP cookies. Much worse are some of the more obscure cookie methods (Flash Cookies, various history hacks). They don’t really provide any more of a privacy risk than what the browser already has been offering for the past decade.

To Shut Up The Geolocaiton Conspiracy Theorists

Before someone even attempts the “Geolocation API lets advertisers know my location” myth, lets get this out of the way. The specification explicitly states:

User agents must not send location information to Web sites without the express permission of the user. User agents must acquire permission through a user interface, unless they have prearranged trust relationships with users, as described below. The user interface must include the URI of the document origin [DOCUMENTORIGIN]. Those permissions that are acquired through the user interface and that are preserved beyond the current browsing session (i.e. beyond the time when the browsing context [BROWSINGCONTEXT] is navigated to another URL) must be revocable and user agents must respect revoked permissions.

Some user agents will have prearranged trust relationships that do not require such user interfaces. For example, while a Web browser will present a user interface when a Web site performs a geolocation request, a VOIP telephone may not present any user interface when using location information to perform an E911 function.

To my knowledge no user agent implements Geolocation without complying with these specifications. None.

No HTML5 Needed For Fingerprinting

Even if you do manage to wipe all the above storage locations, you’re still not untraceable. Browser fingerprinting is the idea that just your system configuration makes you unique enough to be traceable. This includes things like your browser version, platform, flash version, and various other bits of data plugins may additionally leak. The EFF recently did a rather impressive study to learn about the accuracy of this technique. Computers with Flash and Java installed sport 18.8 bits of entropy and result in 94.2% of browsers being unique in the EFF study [cite, pdf]. Of course their data was likely skewing towards more experienced web users who are more likely to have an assortment of customizations to their computer (specific plugins, more variety in web browsers, operating systems, fonts) than the average internet user. I’d wager that their data downplays the effectiveness of this technique.

The idea that HTML5 is a privacy risk is FUD. It doesn’t provide any worse security than anything else already out there. It’s actually easier to counteract than what’s already being used since it’s handled by the browser.

The Future

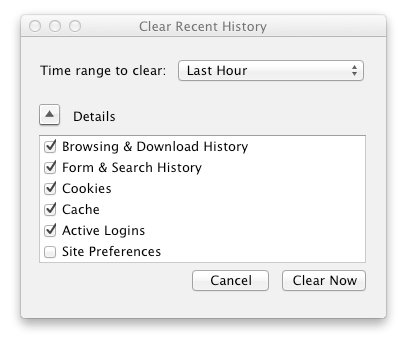

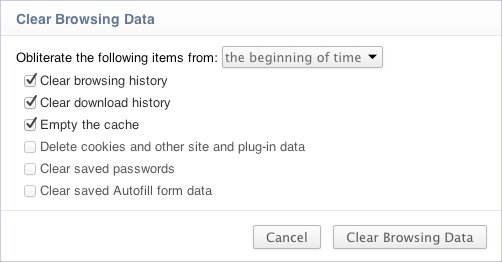

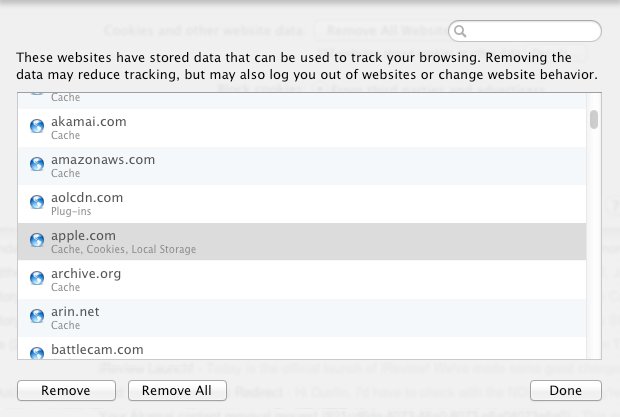

I still believe all browsers out there can do a much better job of protecting privacy when it comes to local data storage for the purpose of tracking. What I believe what needs to happen is web browsers need to start moving away from the “cookie manager” interfaces that are now a decade+ old and move towards a “my data management” interface that lets users view and delete more than just cookies. It needs to encompass all the storage methods listed above as supported by the browser. Hooks should also exist so that plug-ins that have data storage (like Flash) can also be dealt with using the same UI.

Additionally it needs to be possible to control retention policies per website. For example I should be able to let Google storage persist indefinitely, Facebook for 2 weeks, and Yahoo for the length of my browser session should I wish.

My personal preference would be for a website to denote the longest storage time for any object on a webpage in the UI. Clicking on it would give a breakdown of all hostnames that makeup the page, what they are storing and let the user select their own policy. With 2 clicks I could then control my privacy on a granular level. For example visiting SafePasswd.com would give me a [6] in the UI. Clicking would show me a panel this:

+------------------------------------------------------------------------------+

| My Data Settings for SafePasswd.com: |

| |

| Host Longest Requested Lifespan Your Choice |

| |

| *safepasswd.com 2 years [site default] |

| googleads.g.doubleclick.net 6 years [browser session] |

| |

| |

| (Done) (Cancel) |

+------------------------------------------------------------------------------+

I could then override googleads.g.doubleclick.net to be for the browser session via the drop down if that’s what I wanted. I could optionally forbid it from saving anything if that’s what I wanted. I could optionally click-through for more detail or view the data to help me make my decision. Perhaps this would also be a good place for P3P like data to be available. One of the notable failures of P3P that impeded usage was it was never easy to view so it never caught on.

The browser would then remember I forbid googleads.g.doubleclick.net from storing data beyond my browser session. This would apply to googleads.g.doubleclick.net regardless of what website it was used on.

This model works better than the “click to confirm cookie” model that only a handful of people on earth ever had the patience for. It provides easy access to control and view information with minimal click-throughs.

It also makes a web page much more transparent to an end-user who could then easily see who they are interacting with when they visit one webpage with several ads, widgets, social media integration points etc.

One click to view data policies, two clicks to customize, three to save.

HTML5 is not a risk here. The web moving to HTML5 is like going from the lawless land to a civilized society where structure and order rule.